Chinese Journal of Lasers, 2020, 47(4): 0410001, published online: 2020-04-09

Multi-Feature 3D Road Point Cloud Semantic Segmentation Method Based on Convolutional Neural Network

Keywords: remote sensing; neural network; laser point cloud; semantic segmentation; multi-feature; point cloud projection

摘要

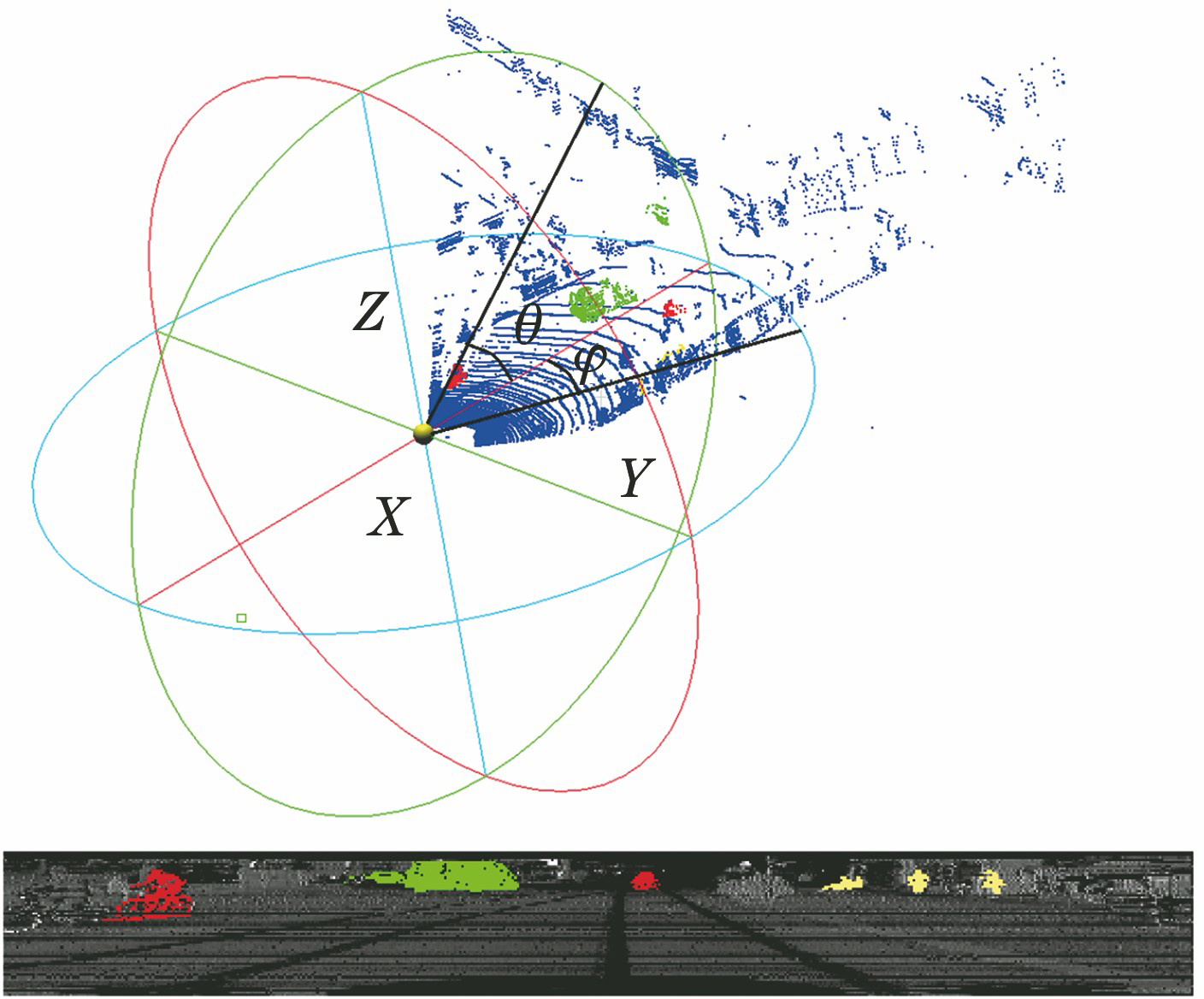

针对道路场景下三维激光点云语义分割精度低的问题,提出了一种基于卷积神经网络并结合几何点云多特征的端到端的语义分割方法。首先,通过球面投影构造出点云距离、相邻夹角及表面曲率等特征图像,以便于应用卷积神经网络;接着,利用卷积神经网络对多特征图像进行语义分割,得到像素级的分割结果。所提方法将传统点云特征融入到卷积神经网络中,提升了语义分割效果。使用KITTI点云数据集进行测试,结果表明:所提三维点云多特征卷积神经网络语义分割方法的效果优于SqueezeSeg V2等没有结合点云特征的语义分割方法;与SqueezeSeg V2网络相比,所提方法对车辆、自行车和行人分割的精确率分别提高了0.3、21.4、14.5个百分点。

Abstract

To address the low accuracy of semantic segmentation of three-dimensional (3D) laser point clouds in road scenes, an end-to-end semantic segmentation method based on a convolutional neural network (CNN) combined with multiple geometric point cloud features is proposed. First, feature images of point distance, adjacent angle, and surface curvature are constructed by spherical projection so that a CNN can be applied to them. Then, the CNN performs semantic segmentation on these multi-feature images to obtain pixel-level segmentation results. By integrating traditional point cloud features into the CNN, the proposed method improves semantic segmentation performance. Tests on the KITTI point cloud dataset show that the proposed method outperforms semantic segmentation methods that do not incorporate point cloud features, such as SqueezeSeg V2. Compared with the SqueezeSeg V2 network, the proposed method improves the segmentation precision for cars, bicycles, and pedestrians by 0.3, 21.4, and 14.5 percentage points, respectively.
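The abstract names the three input channels but not how they are computed. The following is a minimal pure-Python sketch of one way to build such channels; it is not the authors' implementation. The grid size (64 × 512), the vertical field of view (+3° to −25°, roughly a 64-beam KITTI scanner), the adjacent-angle formula (a formulation used in range-image clustering), and the PCA "surface variation" curvature λ_min/(λ0 + λ1 + λ2) over a 3 × 3 pixel neighborhood are all assumptions. The helper names `spherical_project`, `adjacent_angle`, `smallest_eig_ratio`, and `feature_images` are hypothetical.

```python
import math

# ASSUMED grid and sensor parameters (roughly a 64-beam KITTI scanner);
# the paper's actual projection settings are not stated in the abstract.
H, W = 64, 512
FOV_UP, FOV_DOWN = math.radians(3.0), math.radians(-25.0)


def spherical_project(points, h=H, w=W):
    """Map (x, y, z) points to an h x w grid, keeping the nearest point per pixel."""
    fov = FOV_UP - FOV_DOWN
    grid = [[None] * w for _ in range(h)]
    for x, y, z in points:
        r = math.sqrt(x * x + y * y + z * z)
        if r == 0.0:
            continue
        yaw, pitch = math.atan2(y, x), math.asin(z / r)  # azimuth, elevation
        u = min(max(int(0.5 * (1.0 - yaw / math.pi) * w), 0), w - 1)
        v = min(max(int((1.0 - (pitch - FOV_DOWN) / fov) * h), 0), h - 1)
        if grid[v][u] is None or r < grid[v][u][3]:
            grid[v][u] = (x, y, z, r)
    return grid


def adjacent_angle(r_a, r_b, alpha):
    """Angle between the laser beam and the segment joining two neighboring
    returns separated by angular step alpha (ASSUMED definition)."""
    d1, d2 = max(r_a, r_b), min(r_a, r_b)
    return math.atan2(d2 * math.sin(alpha), d1 - d2 * math.cos(alpha))


def smallest_eig_ratio(pts):
    """PCA surface variation lambda_min / (l0 + l1 + l2) of a small point set."""
    n = len(pts)
    mean = [sum(p[i] for p in pts) / n for i in range(3)]
    d = [[p[i] - mean[i] for i in range(3)] for p in pts]
    c = [[sum(q[i] * q[j] for q in d) / n for j in range(3)] for i in range(3)]
    tr = c[0][0] + c[1][1] + c[2][2]
    if tr == 0.0:
        return 0.0
    p1 = c[0][1] ** 2 + c[0][2] ** 2 + c[1][2] ** 2
    if p1 == 0.0:  # covariance is already diagonal
        return min(c[0][0], c[1][1], c[2][2]) / tr
    # Closed-form eigenvalues of a symmetric 3x3 matrix (trigonometric method).
    q = tr / 3.0
    p2 = sum((c[i][i] - q) ** 2 for i in range(3)) + 2.0 * p1
    p = math.sqrt(p2 / 6.0)
    b = [[(c[i][j] - (q if i == j else 0.0)) / p for j in range(3)] for i in range(3)]
    det_b = (b[0][0] * (b[1][1] * b[2][2] - b[1][2] * b[2][1])
             - b[0][1] * (b[1][0] * b[2][2] - b[1][2] * b[2][0])
             + b[0][2] * (b[1][0] * b[2][1] - b[1][1] * b[2][0]))
    phi = math.acos(min(max(det_b / 2.0, -1.0), 1.0)) / 3.0
    lam_min = q + 2.0 * p * math.cos(phi + 2.0 * math.pi / 3.0)
    return max(lam_min, 0.0) / tr


def feature_images(points, h=H, w=W):
    """Return range, adjacent-angle and curvature channels as h x w lists."""
    grid = spherical_project(points, h, w)
    alpha = 2.0 * math.pi / w  # horizontal angular step between columns
    rng = [[0.0] * w for _ in range(h)]
    ang = [[0.0] * w for _ in range(h)]
    curv = [[0.0] * w for _ in range(h)]
    for v in range(h):
        for u in range(w):
            cell = grid[v][u]
            if cell is None:
                continue
            rng[v][u] = cell[3]
            right = grid[v][(u + 1) % w]  # azimuth wraps around 360 degrees
            if right is not None:
                ang[v][u] = adjacent_angle(cell[3], right[3], alpha)
            nbrs = [grid[v + dv][(u + du) % w][:3]
                    for dv in (-1, 0, 1) for du in (-1, 0, 1)
                    if 0 <= v + dv < h and grid[v + dv][(u + du) % w] is not None]
            if len(nbrs) >= 3:
                curv[v][u] = smallest_eig_ratio(nbrs)
    return rng, ang, curv
```

In such a pipeline the three channels would be stacked into one multi-channel image as the CNN input. Pure Python is used here only to keep the sketch dependency-free; a practical version would vectorize the projection and use a library eigen-solver for the curvature.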

Zhang Aiwu, Liu Lulu, Zhang Xizhen. Multi-Feature 3D Road Point Cloud Semantic Segmentation Method Based on Convolutional Neural Network[J]. Chinese Journal of Lasers, 2020, 47(4): 0410001.