Acta Optica Sinica, 2020, 40(1): 0111021, published online: 2020-01-06

Single-Image Refocusing Using Light Field Synthesis and Circle of Confusion Rendering

Figures & Tables

Fig. 2. Light field digital refocusing. (a) Sensor image; (b) sub-aperture images; (c) refocused image
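
The step from sub-aperture images to a refocused image in Fig. 2 can be illustrated with the generic shift-and-sum scheme. This is a minimal sketch and not necessarily the paper's implementation: the function name `refocus`, the `(U, V, H, W)` array layout, and the slope parameter `alpha` are assumptions made for illustration, and shifts are rounded to whole pixels for simplicity.

```python
import numpy as np

def refocus(light_field, alpha):
    """Shift-and-sum refocusing over sub-aperture images (illustrative sketch).

    light_field: array of shape (U, V, H, W), one grayscale image per view
                 (assumed layout).
    alpha: assumed slope parameter; pixel shift applied per unit of angular
           offset from the central view. Changing alpha moves the focal plane.
    """
    U, V, H, W = light_field.shape
    uc, vc = (U - 1) / 2.0, (V - 1) / 2.0  # angular coordinates of the central view
    out = np.zeros((H, W), dtype=np.float64)
    for u in range(U):
        for v in range(V):
            # Integer-rounded shift; np.roll wraps around at the image borders.
            dy = int(round(alpha * (u - uc)))
            dx = int(round(alpha * (v - vc)))
            out += np.roll(light_field[u, v], shift=(dy, dx), axis=(0, 1))
    return out / (U * V)  # average of the aligned views
```

With `alpha = 0` the result is the plain average of all views; a scene point whose position changes by `d` pixels per view is brought into focus by `alpha = -d`.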

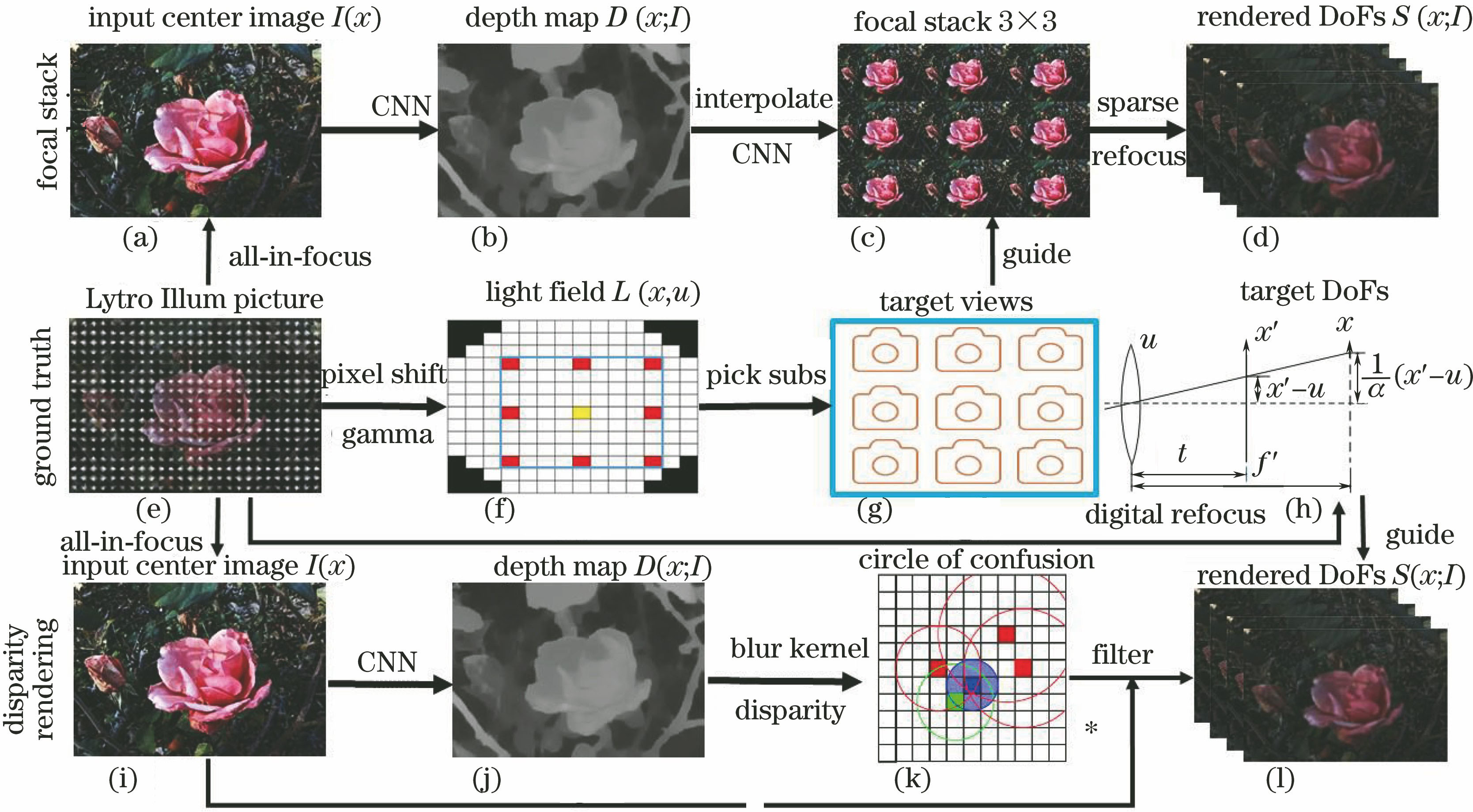

Fig. 4. Deep network architecture. (a) Focal-stack-based method; (b) disparity-based method

Fig. 5. Comparison of monocular depth estimation results. (a) Center image; (b) disparity method; (c) focal stack method; (d) method of Godard et al.[16]; (e) normalized method of Cheng et al.[17] (SSIM: 0.756); (f) normalized disparity method (SSIM: 0.823); (g) normalized focal stack method (SSIM: 0.895); (h) method of Jeon et al.[18]

Fig. 6. Qualitative and quantitative comparison of light field synthesis methods. (a) Method of Kalantari et al.[10]; (b) method of Srinivasan et al.[13]; (c) our method; (d) ground truth center image; (e)(f)(g) center images rendered by the method of Kalantari et al.[10], the method of Srinivasan et al.[13], and our method, respectively

Fig. 7. Qualitative and quantitative comparison of rendering methods. (a) Center image; (b1)-(b4) refocused images; (c) depth estimation using the focal stack method; (d1)-(d4) rendering results using the focal stack method; (e) depth estimation using the disparity method; (f1)-(f4) rendering results using the disparity method; (g)(h)(i) occlusion detection, foreground focus, and background focus obtained by the method of Zhang et al.[5]; (j)(k) results of the method of Wang et al.[19], with depth 3, SSIM 0.902, PSNR 32.80 and depth 3, SSIM 0.916, PSNR 33.28, respectively
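
For readers unfamiliar with the PSNR figures quoted in the caption above, the standard definition can be sketched as follows. This is a generic illustration, not the paper's evaluation code: the function name `psnr` and the default peak value of 255 (8-bit images) are assumptions.

```python
import numpy as np

def psnr(reference, rendered, peak=255.0):
    """Peak signal-to-noise ratio in dB between two images of equal shape.

    peak: assumed maximum pixel value (255 for 8-bit images).
    """
    ref = np.asarray(reference, dtype=np.float64)
    ren = np.asarray(rendered, dtype=np.float64)
    mse = np.mean((ref - ren) ** 2)  # mean squared error over all pixels
    if mse == 0:
        return float("inf")  # identical images
    return 10.0 * np.log10(peak ** 2 / mse)
```

Higher values indicate a rendered image closer to the reference; identical images give an infinite PSNR.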

Fig. 8. Comparison of rendering results on different datasets. (a) Ground truth center image; (b) depth estimation; (c) refocused at a nearer position; (d) refocused at a farther position

Fig. 9. Comparison of rendering results for real scenes with images captured by different cameras. (a) Original image; (b)-(e) refocused images at four depths rendered by our method; (f) depth map; (g)(h) images captured by a dual camera focused at two positions; (i)(j) images captured by a Canon camera focused at two positions

Table 1. Parameters for light field datasets

Table 2. Quantitative analysis of rendering results on light field datasets

Qi Wang, Yutian Fu. Single-Image Refocusing Using Light Field Synthesis and Circle of Confusion Rendering[J]. Acta Optica Sinica, 2020, 40(1): 0111021.